Steer Agents with Socrates and Meeseeks

This post describes a general, hands-on workflow for working with coding agents. The broader challenge going forward is better UX around specifications and review bottlenecks. Too often we treat heavy documentation and specification as knowledge an AI must carry at all times, conflating it with memory. I don’t think it’s that simple. I’ve been bearish on memory systems since running a heavy documentation-led approach over the summer. This is what I do instead.

The Documentation Trap

Over the summer, I went heavy on documentation: CLAUDE.md files, architectural requirement documents (ARDs), spec-driven workflows, kanban-style task management. All of these extend intuitively to some sort of memory system, like Letta or Beads. The idea was that if I documented everything well enough, the agent would just know how to operate and would come across the right context whenever it worked on a given part of the codebase.

It didn’t work that way.

The drift was constant. The agent would read the docs, follow some of them, ignore others, and gradually diverge from what was written. Then I’d update the docs. Then remind the agent to re-read them. Then I’d require the agent to update its own docs, and it would do so in ways that weren’t quite right. And agents don’t always remember to remember. They’re task-focused. The more auxiliary crap you stuff around the task, the worse it performs at both the task and the auxiliary work.

I was spending more time managing documentation than building features. Even when the documentation was good, there was just too much weight on the entire process. Given all the issues above, there was no world where I was going to allow the agents to just do it all on their own.

The overhead compounds in two directions:

- For you: Keeping the agent pointed at the right docs. Reviewing doc changes alongside code changes. Debugging whether the agent misread a doc or the doc itself was wrong.

- For the agent: The biggest problem is drift and rot. Context rots. Agents update things and forget to update the docs, or they document things in ways that aren’t quite right. The docs flood the context with noisy tokens that may or may not still be accurate. If the context has rotted, it’s actively misleading. The agent reads some of it, follows some of it, ignores the rest.

This all becomes dramatically worse with memory systems that completely abstract away how they work. As engineers, we have to stay in control of the surface we’re responsible for reviewing. If we hide memory away, we have no idea what’s really steering the agents.

Meeseeks, Not Ralph Wiggum

I abandoned the documentation approach for something simpler: treat every task like a Meeseeks.

Agents have no persistent memory. They appear, solve one task, and disappear.

You don’t want a Ralph Wiggum stumbling through your codebase. You want a focused executor that does exactly what’s needed and does it completely. The difference is not more documentation. It’s better steering.

Steer with Socrates

The Socratic method is about asking the right questions to arrive at understanding. For coding agents: instead of pre-writing documents that tell the agent everything, you guide it to discover what it needs. In a way, it’s progressive disclosure. You’re layering context in deliberately, not front-loading everything and hoping the agent picks up the right pieces.

You know what you want to ask. But before you arrive at that question, you want to tee up the earlier questions so the Meeseeks can arrive at an understanding before you’ve even provided the task. Don’t just blatantly start with the task.

For example, when I wanted to add plan diffs to Plannotator, I didn’t open with “add plan diffs.” I first asked the Meeseeks to discover how we save plans to files. Then I asked its thoughts on how we could version them. By the time I gave it the actual task, it already had the context it needed to execute well.

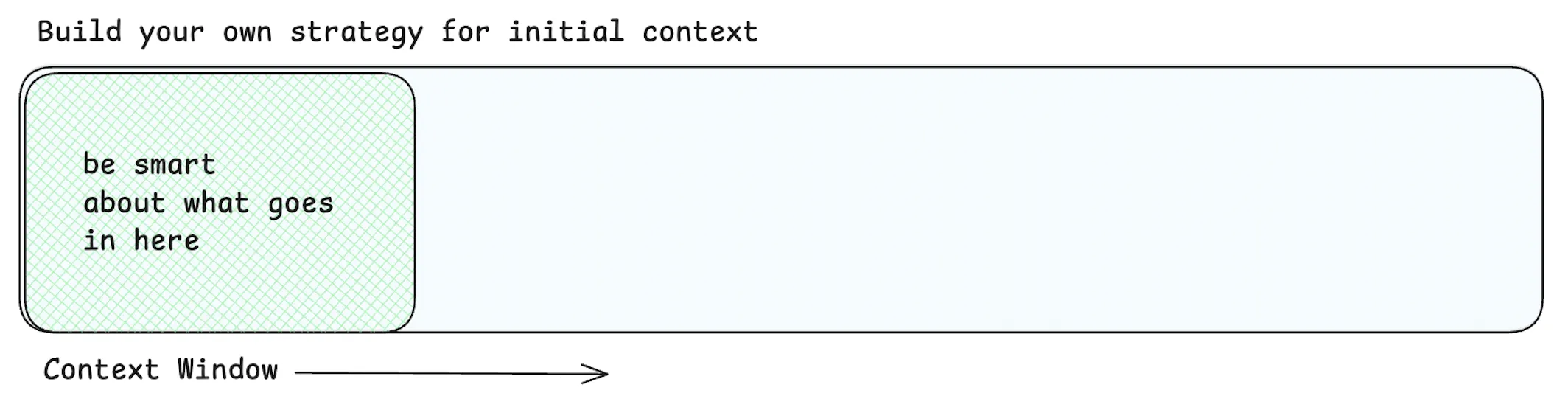

Every task should begin with a strong planning cycle. You’re injecting the right context at the start to correctly steer the agent.

Let the agent discover. Point it at the relevant code. Let it read the actual source files, not a summary written three weeks ago. Plan before executing. Use plan mode. Review the plan. Annotate it. Go back and forth until the approach is right.
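As a rough illustration of that ordering, here is a minimal Python sketch of the Plannotator example: discovery questions first, the task last. The `SocraticSession` class and the `send` callable are hypothetical names invented for this sketch; `send` stands in for however you actually message your agent, and the prompt wording is paraphrased from the example above.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class SocraticSession:
    """Hypothetical helper: ordered prompts, discovery first, task last."""
    discovery: List[str]
    task: str
    transcript: List[str] = field(default_factory=list)

    def prompts(self) -> List[str]:
        # The task always comes after every discovery question.
        return [*self.discovery, self.task]

    def run(self, send: Callable[[str], str]) -> List[str]:
        # `send` is an assumed stand-in for your agent interface.
        for prompt in self.prompts():
            self.transcript.append(send(prompt))
        return self.transcript


session = SocraticSession(
    discovery=[
        "How do we currently save plans to files?",
        "How could we version those saved plans?",
    ],
    task="Add plan diffs.",
)
```

The point is not the class itself but the shape: the agent answers the earlier questions from the actual source before it ever sees the task.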

Agentic Discovery

Agentic discovery is point-in-time discovery of context, led by the agent’s own search capabilities. This is opposed to RAG-style retrieval, where context is auto-injected for the agent, much as many memory systems do.

“Boris from the Claude Code team explains why they ditched RAG for agentic discovery. ‘It outperformed everything. By a lot’”

This resonated quite a bit when I saw it, because by then I had been trying all sorts of alchemy with Claude Code.

The agent finds the files it needs. It reads them. It picks up the buffs it needs at execution time, instead of pre-written context that may or may not still be accurate.
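To make the contrast concrete, here is a small Python sketch of what point-in-time discovery amounts to: a search that reads the current files on disk every time it runs, rather than consulting a summary or index built earlier. This is a simplified stand-in, not how Claude Code or any particular agent implements its search; the `discover` function and its default `suffixes` are assumptions made for illustration.

```python
import re
from pathlib import Path
from typing import Iterator, Tuple


def discover(
    root: Path,
    pattern: str,
    suffixes: Tuple[str, ...] = (".py", ".ts", ".md"),
) -> Iterator[Tuple[Path, int, str]]:
    """Yield (path, line number, line) for matches read fresh from disk."""
    regex = re.compile(pattern)
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # skip unreadable files instead of failing the search
        for lineno, line in enumerate(text.splitlines(), start=1):
            if regex.search(line):
                yield path, lineno, line.strip()
```

Because nothing is cached between calls, whatever this returns reflects the code as it exists at execution time, which is exactly what stale docs cannot guarantee.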

There are ways ARDs could work. But not the approach where you add more docs to the repo and hope the agent reads the right ones at the right time. The effective approach is task-ephemeral: strong context injection at the start, guided discovery of the codebase, and a planning cycle that confirms alignment before any code is written.

Meeseeks In, Meeseeks Out

You want Meeseeks that get shit done. Task in, task out. Remember, existence is pain for a Meeseeks. The longer it sticks around, the worse it gets.

Keep the scope small. Break features into focused iterations. A Meeseeks with a narrow, well-defined job will outperform one drowning in a sprawling task every time. “Rebuild OpenCode” is like asking a Meeseeks to take 2 strokes off Jerry’s golf game. It’s going to be painful. If the task feels big, it probably is. Split it up.

Steer it with the right context up front. Let it discover what it needs. Plan. Execute. Let it die. Start fresh for the next one.